Stop re-explaining yourself to your AI.

MythOS is the shared memory between you and every AI you use. Capture what you read. Connect what you think. Publish what matters — across every model, every agent, every channel.

Free to start · No credit card required

Don't take our word for it. Ask .

This is the founder's actual MythOS library. Ask it anything — your library will do this for your readers.

Answers are grounded in real memos, with citations. No signup required to try.

Your library, one click from every AI.

You already have a memory. It's just scattered across Notion pages, voice memos, half-written drafts, and every AI chat you've ever had.

Write once. Remember forever.

Markdown editor with @mentions, #hashtags, collapsible headings, and images that paste in like email. Your memos link to each other automatically. Your library builds itself.

Build your library with your AI, not around it.

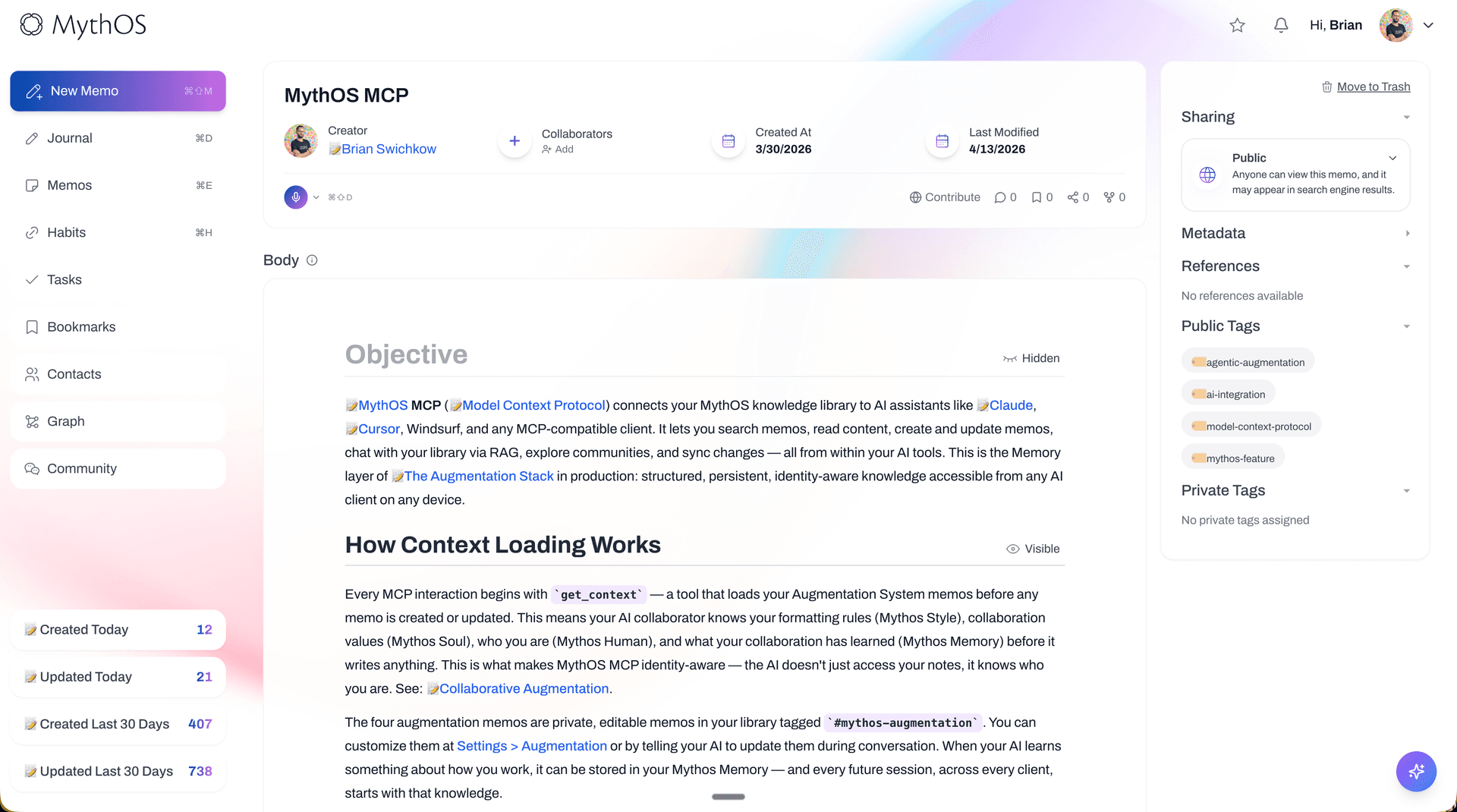

MythOS is designed to be co-authored. Ask Claude to research a topic — it writes the memo and files it for you. Use Cursor, Windsurf, or any MCP-native app, and your library follows. Every conversation ends with something permanent.

Connect via MCP →

One library. Every workflow.

Drop a memo into any automation. Zapier reads from MythOS. Your n8n flow pulls your notes before firing. Your agent loads your library as context before replying. Your memory becomes the single source of truth the rest of your stack can actually reference.

See integrations →

Talk to what you've written.

Every memo becomes searchable by meaning, not just keywords. Ask your library questions. Get answers grounded in your own words, with citations to the exact memo where you wrote them.

Try the chat →

Your library, out loud.

Publish any memo with one toggle. Readers get your prose, your mentions, your tags, and a chat panel that speaks in your voice — grounded in your own memos. Every memo is a live page. Every library is a live conversation.

See a public memo →

Notes don't think. Networks do.

A #memory system that survives every model migration, grounded in @the-portable-context doctrine. Ideas become nodes; nodes become navigable.

One source. Three channels.

Turn any public memo into a discussion space: upvote, comment, fork. Send it as a newsletter issue to your subscribers. Get surfaced in search, by Google and every AI indexer, thanks to JSON-LD and /llms.txt. Your audience doesn't come to the platform; the platform goes to them.
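For the curious, here is a minimal sketch of what JSON-LD markup for a public memo could look like. It is an illustration only, not MythOS's actual output: the function names, the choice of the schema.org `Article` type, and the example URL are all assumptions.

```python
import json


def memo_to_json_ld(title, url, author, published, tags):
    """Describe a public memo as a schema.org Article (hypothetical mapping)."""
    return {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": title,
        "url": url,
        "author": {"@type": "Person", "name": author},
        "datePublished": published,
        "keywords": ", ".join(tags),
    }


def render_script_tag(data):
    """Wrap the JSON-LD in the script tag that crawlers look for."""
    # Escape "</" so memo text can never close the script element early.
    payload = json.dumps(data, indent=2).replace("</", "<\\/")
    return '<script type="application/ld+json">' + payload + "</script>"


if __name__ == "__main__":
    # Hypothetical memo; example.com stands in for a real library URL.
    memo = memo_to_json_ld(
        title="The portable context",
        url="https://example.com/memos/the-portable-context",
        author="A. Writer",
        published="2024-01-01",
        tags=["memory"],
    )
    print(render_script_tag(memo))
```

A tag like this sits in the page's HTML head, so search engines and AI indexers can read the memo's title, author, and date without parsing the prose.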

Using Claude, ChatGPT, or Cursor? Plug them in.

One MCP server URL connects your library to every AI you already use. About 60 seconds, no code.

Addressable by every AI you'll ever use.

MythOS publishes an llms.txt, serves an agent-scoped API, and speaks OpenAPI. Your Claude reads your library. Your ChatGPT reads your library. Your next model, trained next year, reads your library. Your memory doesn't die when the AI does.
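As a rough sketch of how an agent might read an llms.txt file, the snippet below parses the layout described in the public llms.txt proposal: a title, then sections of markdown links with optional notes. The sample content and the `parse_llms_txt` helper are hypothetical; this is not MythOS's actual file or API.

```python
import re

# Hypothetical sample in the llms.txt layout; not a real MythOS file.
SAMPLE_LLMS_TXT = """\
# Example Library

> A hypothetical public library of memos.

## Memos

- [Portable context](https://example.com/memos/portable-context): Why memory should outlive any one model
- [Daily journal](https://example.com/memos/journal)

## Optional

- [Archive](https://example.com/archive): Older memos
"""

# Matches "- [title](url)" with an optional ": note" suffix.
LINK_RE = re.compile(
    r"^-\s*\[(?P<title>[^\]]+)\]\((?P<url>[^)]+)\)(?::\s*(?P<note>.*))?$"
)


def parse_llms_txt(text):
    """Return (title, {section name: [(link title, url, note), ...]})."""
    title = None
    sections = {}
    current = None
    for raw in text.splitlines():
        line = raw.strip()
        if line.startswith("# ") and title is None:
            title = line[2:].strip()
        elif line.startswith("## "):
            current = line[3:].strip()
            sections[current] = []
        elif current is not None:
            match = LINK_RE.match(line)
            if match:
                sections[current].append(
                    (match["title"], match["url"], match["note"] or "")
                )
    return title, sections


if __name__ == "__main__":
    title, sections = parse_llms_txt(SAMPLE_LLMS_TXT)
    print(title)
    for name, links in sections.items():
        print(name, [url for _, url, _ in links])
```

Because the file is plain markdown, any model or script that can fetch a URL can list a library's public memos this way, with no SDK required.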

Simple tiers. Yours forever.

Three tiers, no surprises. Export everything anytime.

- Unlimited memos + daily journal

- Media uploads (5MB/file, 500MB total)

- Voice transcription

- Custom feed and memo URLs

- Everything in Scribe

- Public memo publishing

- Unlimited collaborators

- Agent API + MCP + /llms.txt

- Email ingestion

- Everything in Scholar

- Custom domain

- Community spaces + moderation

- AI agents trained on your library

- Adaptive AI as a Service

Before you ask.

Is this related to Anthropic's Claude "MythOS"?

No. We've been MythOS since 2017 — years before Anthropic existed. If anything, it's the other way around.

What if I want to leave?

Export everything as markdown anytime, including media. Your library is yours; we're just hosting it.

Do I need to know markdown?

No. The editor works like any rich-text tool — bold, lists, checkboxes, images. Markdown is what we save underneath so it stays portable forever.

What AIs does this work with?

Claude, ChatGPT, Gemini, Grok, Perplexity — anything that can speak HTTP or MCP. We publish an llms.txt and an agent-scoped API. Your next model will work too.

Can I bring my own AI key?

Yes. Free and Pro both support BYO keys for OpenAI or Anthropic. Pro also includes hosted AI if you'd rather not manage keys.

Is my library private by default?

Yes. Every memo starts private. You choose what to make public, unlisted, or shared with specific contacts via permission tags.

Not ready yet? Take the ideas with you.

Occasional letters on building a memory your AI can actually use. No spam, unsubscribe anytime.